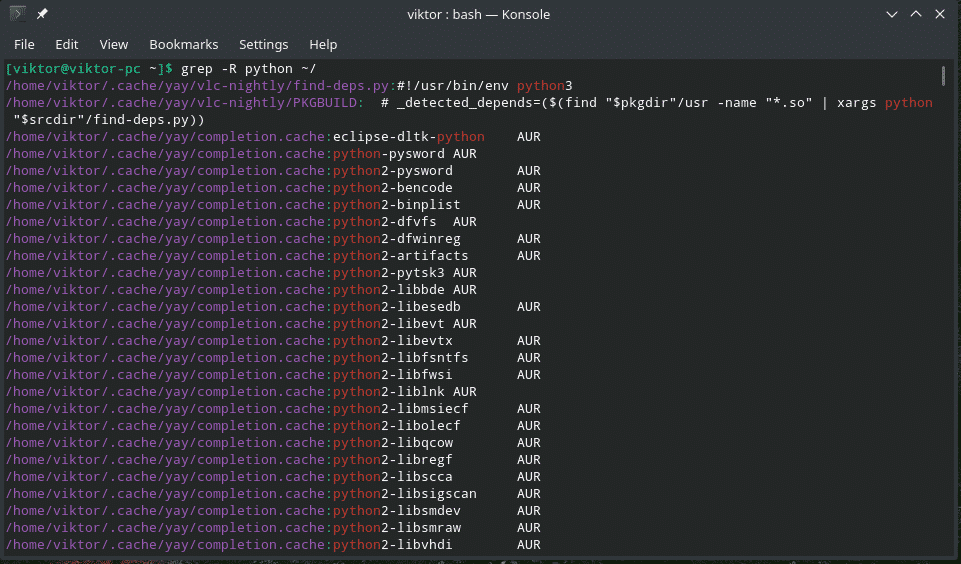

Running $ sort Test_2.txt > Test_2_sort_0.txt and then using grep | uniq on Test_2_sort_0.txt almost returned the expected output. Running $ grep '9791' Test_grep_uniq_sort.txt | uniq -c gave this result: 1 AB-9791_Foo, then DE-9791_Bar (expected: 4, actual: 1, 2, 1) and AB-9791_Foo (expected: 4, actual: 1, 3). But I am having trouble sorting the file and removing the repeated lines.
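A likely reason for the split counts is that uniq only merges identical lines that are adjacent, so grep output that has not been sorted yields one count per run of duplicates. The sketch below uses made-up sample data (the file contents and the temporary directory are assumptions, not the original Test_2.txt) to compare the unsorted and sorted pipelines.

```shell
# Hypothetical sample data; the real Test_2.txt is not shown in the post.
tmpdir=$(mktemp -d)
printf '%s\n' AB-9791_Foo DE-9791_Bar DE-9791_Bar DE-9791_Bar \
    AB-9791_Foo AB-9791_Foo AB-9791_Foo DE-9791_Bar XY-1234_Baz \
    > "$tmpdir/Test_2.txt"

# Without sorting, uniq -c counts every run of adjacent duplicates separately.
unsorted=$(grep '9791' "$tmpdir/Test_2.txt" | uniq -c)

# Sorting the matches first makes identical lines adjacent,
# so each distinct line is counted exactly once.
sorted=$(grep '9791' "$tmpdir/Test_2.txt" | sort | uniq -c)

printf 'unsorted:\n%s\n\nsorted:\n%s\n' "$unsorted" "$sorted"
```

If the counts are still split after sorting, the lines probably differ in invisible characters such as trailing spaces or carriage returns; piping a few lines through cat -A (GNU) or od -c can reveal that.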

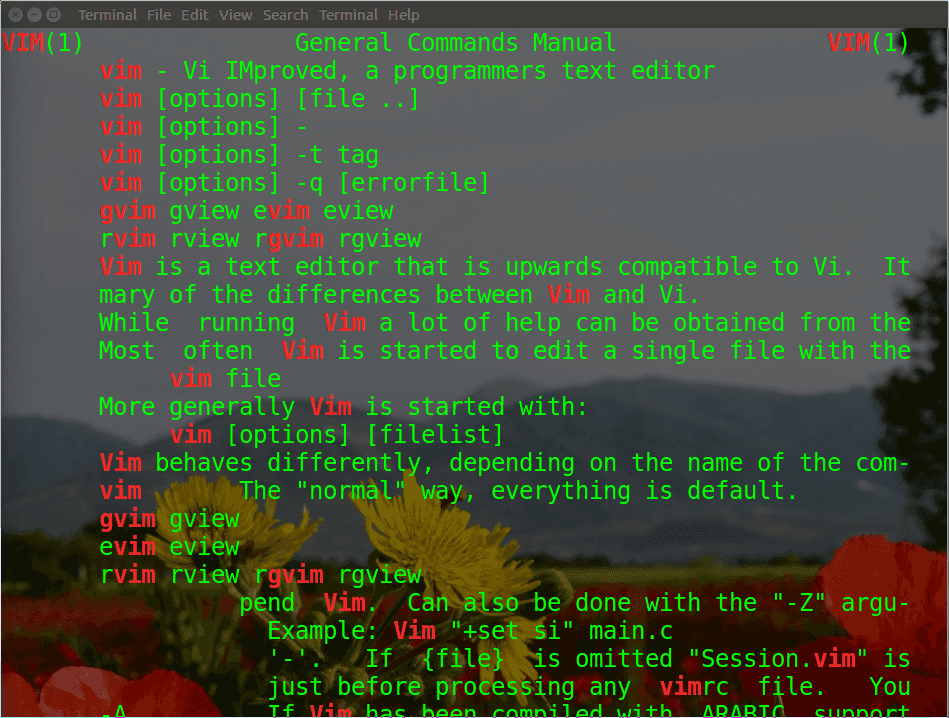

It seems fairly straightforward and I understand how to do most of it. I copied and pasted each AB-9791_Foo line, so they should be identical. We are supposed to use grep, along with a regular expression and other command-line tools, to generate a list of unique miRNA names in the human genome. Here is an example: echo localhost > localhosts, then grep -v -f localhosts /etc/hosts 127.0.1. In my test file Test_2.txt these lines are written AB-9791_Foo. You could accomplish this with grep.
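One way to build such a list of unique names is to have grep print only the matched part of each line and then deduplicate with sort -u. The following is a minimal sketch: the input lines, the file name annotations.txt, and the regular expression are all assumptions chosen for illustration, since the post does not show the real file. The -o option is supported by GNU and BSD grep but is not part of POSIX.

```shell
# Hypothetical annotation lines; the real input format is not given in the post.
tmpdir=$(mktemp -d)
printf '%s\n' \
    'chr17 miRNA Name=hsa-mir-21' \
    'chr9 miRNA Name=hsa-let-7a' \
    'chr17 miRNA Name=hsa-mir-21' \
    'chr13 gene Name=BRCA2' \
    > "$tmpdir/annotations.txt"

# -o prints only the matching text, -E enables extended regular expressions.
# sort -u sorts the names and drops duplicates in one step.
names=$(grep -oE 'hsa-(mir|let)-[0-9]+[a-z]?' "$tmpdir/annotations.txt" | sort -u)

printf '%s\n' "$names"
```

Using sort -u here is equivalent to sort | uniq; the longer form is only needed when uniq options such as -c are wanted.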

I want to filter all lines from a file that contain mySearchString and after that group them together and count them. Example: find all lines that contain 9791. Using $ grep "9791" myFile.txt gives this result: AB-9791_Foo. This result should be grouped and counted (like SQL GROUP BY with COUNT) like this: AB-9791_Foo 2. What tool is useful (grep, awk, sed, or other) to achieve the second part, to group and count?

Update with test records: This answer uses perl but perl is not installed on our machines.

The most common use of grep is to filter lines of text containing (or not containing) a certain string. paulRHEL4b pipes cat tennis.txt Amelie Mauresmo, Fra. The -c option displays the number of matching lines, and the -m option limits how many matching lines are read. Pipe the output of the grep command to the head command to get the first line. We can also use the sed command to print unique occurrences of a pattern.
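Since perl is not available, awk can do the filtering, grouping, and counting in a single pass. The sketch below is one possible approach, not necessarily the accepted answer; the contents of myFile.txt are invented for the example. It also shows grep -c for the total number of matching lines and head -n 1 for the first match.

```shell
# Hypothetical test records standing in for myFile.txt.
tmpdir=$(mktemp -d)
printf '%s\n' AB-9791_Foo DE-9791_Bar AB-9791_Foo XY-1234_Baz \
    DE-9791_Bar AB-9791_Foo > "$tmpdir/myFile.txt"

# awk keeps a counter per distinct matching line (like GROUP BY ... COUNT)
# and prints "line count" at the end; sort makes the output order stable.
awk_counts=$(awk '/9791/ { n[$0]++ } END { for (k in n) print k, n[k] }' \
    "$tmpdir/myFile.txt" | sort)

# grep -c prints the total number of matching lines, not per-group counts.
total=$(grep -c '9791' "$tmpdir/myFile.txt")

# head -n 1 keeps only the first matching line.
first=$(grep '9791' "$tmpdir/myFile.txt" | head -n 1)

printf '%s\n' "$awk_counts"
printf 'total matches: %s\nfirst match: %s\n' "$total" "$first"
```

With GNU or BSD grep, grep -m 1 '9791' gives the same first line as the head pipeline and stops reading the file early; -m is not specified by POSIX, so the head form is the more portable choice.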
